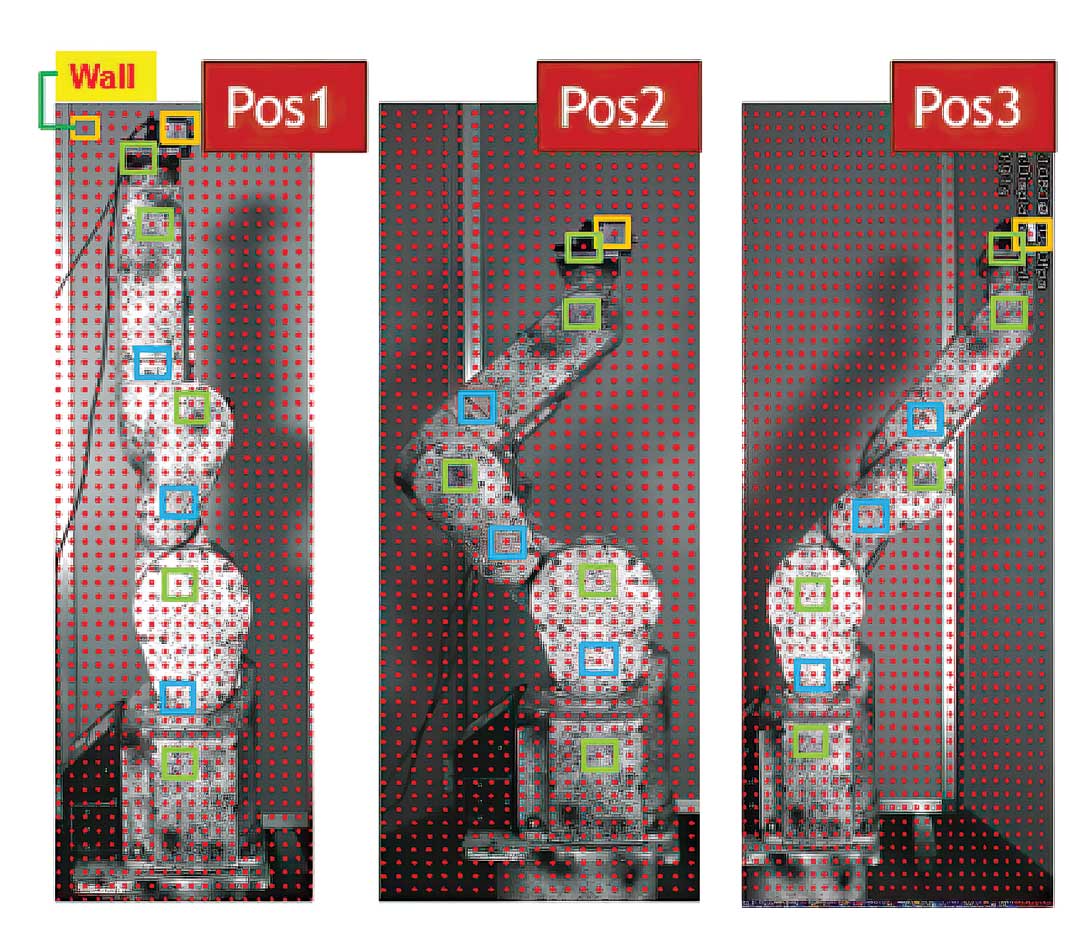

Multi-point DIC measurements of the robot in three operational postures. (Photo courtesy of Hiroshima University)

In modern factories, industrial robots handle a wide range of tasks previously performed by humans, including welding, assembly, painting, material handling, packaging, and quality inspection. Given their importance to production, industrial robots are subject to regular preventive maintenance, ensuring minimal downtime in high-demand environments.

One of the cornerstones of robot maintenance is vibration monitoring. Abnormal vibrations in industrial robots often signal underlying mechanical issues, such as imbalances, loose components, worn bearings, or structural weaknesses. Ultimately, these issues can lead to unexpected shutdowns or slowdowns, costing manufacturers thousands to hundreds of thousands of dollars per hour in lost production. Poor maintenance also contributes to unpredictable robot behavior, creating hazards for workers.

While physical sensors such as piezoelectric accelerometers remain highly effective for precise vibration measurement in industrial robots, they have notable drawbacks. They are costly, and they add weight to moving robotic arms, which may disrupt normal workflows. Wired sensors also require cables that trail along the moving arms, leading to flexing, wear, tangling, or breakage over repeated cycles.

To overcome the limitations of sensor-based techniques, researchers at Hiroshima University (Hiroshima, Japan) have devised a noncontact, multipoint, full-field vibration monitoring system integrating Mikrotron CoaXPress high-speed cameras with Digital Image Correlation (DIC). DIC is a noncontact optical technique used to measure full-field surface deformation, displacement, and strain on materials or structures. Tests confirmed that the Hiroshima University system can remotely and accurately capture vibrations on a functioning industrial robot while avoiding the cost and interference of physical sensors.

System Configuration

The Hiroshima University scientists employed a six-degree-of-freedom vertical articulated robot as a measurement target to evaluate their system. After applying a random speckle pattern to the robot, they attached a small vibration exciter.

Image acquisition was performed using a Mikrotron EoSens 2.0CXP2 camera equipped with a 20 mm focal-length lens and mounted 1.2 meters from the robot. The camera features a 2-megapixel CMOS global shutter sensor coupled with a four-lane CoaXPress 2.0 (CXP-12) interface, allowing it to capture video at 1,920 x 1,080-pixel resolution while operating at 2,200 frames per second (FPS). For this test, the camera was configured to provide a spatial resolution of 0.6 mm/pixel, an image size of 1,920 x 1,080 pixels, and a frame rate of 1,000 FPS.
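As a quick check of what these test settings imply, the short sketch below derives the field of view and the highest vibration frequency that can be sampled without aliasing (the Nyquist limit, which is half the frame rate). The variable names are illustrative and are not taken from the researchers' software.

```python
# Test configuration reported in the article.
width_px, height_px = 1920, 1080
mm_per_px = 0.6
frame_rate_hz = 1000

# Field of view covered by the image at the stated spatial resolution.
fov_width_mm = width_px * mm_per_px
fov_height_mm = height_px * mm_per_px

# Nyquist limit: the highest frequency that can be sampled without aliasing.
nyquist_hz = frame_rate_hz / 2

print(f"Field of view: {fov_width_mm:.0f} mm x {fov_height_mm:.0f} mm")
print(f"Nyquist limit: {nyquist_hz:.0f} Hz")
```

Under these settings the image spans roughly 1.15 m by 0.65 m, and vibration components up to 500 Hz can be resolved.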

Once captured, the video of the robot was split into individual frames. Each 1,920 x 1,080-pixel frame was divided into 128 x 128-pixel blocks with a 64-pixel stride, yielding 435 sub-images that acted as virtual vibration sensors. Mask processing was then applied to these sub-images to select eight key points, representing crucial robot components, for multipoint DIC displacement measurements.
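The block count can be reproduced with a few lines of arithmetic. The sketch below assumes that only full 128 x 128 blocks are kept and that any partial block at the image edge is discarded; the article does not state this, but the assumption yields the reported 435 sub-images. The function name `count_blocks` is hypothetical.

```python
def count_blocks(length_px, block_px, stride_px):
    """Number of full blocks that fit along one axis at the given stride."""
    return (length_px - block_px) // stride_px + 1

BLOCK, STRIDE = 128, 64

cols = count_blocks(1920, BLOCK, STRIDE)  # blocks across the image width
rows = count_blocks(1080, BLOCK, STRIDE)  # blocks down the image height

# Top-left pixel coordinate (x, y) of every sub-image.
origins = [(c * STRIDE, r * STRIDE) for r in range(rows) for c in range(cols)]

print(cols, rows, len(origins))
```

With a 64-pixel stride each block overlaps its neighbor by half, giving 29 columns and 15 rows of virtual sensors.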

A vibration visualization algorithm developed in C++ with Microsoft Visual Studio Community 2017 was accelerated using an NVIDIA GeForce RTX 2080 GPU. The algorithm tracked changes in the high-contrast speckle pattern applied to the robot, generating detailed 2D maps of how the surface moved due to vibrations.

To ensure consistent test measurements, three representative postures were selected based on the robot's actual operating cycle: (1) the home position, (2) an intermediate position where the arm extends and experiences maximum load and joint motion, and (3) the final position where the arm returns to the home configuration. For each posture, the first frame was used as the reference image, and all subsequent frames served as input images to capture the displacement of each robot component. The Mikrotron camera simultaneously captured the moving robot and a fixed background wall within the same frame.
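The reference-versus-input comparison can be illustrated with a minimal sketch. It estimates the integer-pixel shift of a sub-image from the peak of an FFT-based cross-correlation, then subtracts the apparent shift of a background block. Two points are assumptions rather than details from the article: the researchers' actual matching criterion is not described, and the use of the fixed wall as a stationary reference to cancel camera motion is one plausible reading. The function `estimate_shift` and the synthetic data are hypothetical.

```python
import numpy as np

def estimate_shift(reference, current):
    """Integer-pixel (dy, dx) shift of `current` relative to `reference`,
    taken from the peak of the circular cross-correlation."""
    ref = reference - reference.mean()
    cur = current - current.mean()
    corr = np.fft.ifft2(np.fft.fft2(cur) * np.conj(np.fft.fft2(ref))).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peak indices past the midpoint correspond to negative shifts.
    return tuple(int(p) if p <= s // 2 else int(p) - s
                 for p, s in zip(peak, corr.shape))

rng = np.random.default_rng(0)
speckle = rng.random((128, 128))  # synthetic speckle block from the reference frame
wall = rng.random((128, 128))     # synthetic background-wall block

# Simulated later frame: camera shake of (1, 1) pixels affects both blocks,
# and the robot surface moves a further (3, -2) pixels.
robot_now = np.roll(speckle, (4, -1), axis=(0, 1))
wall_now = np.roll(wall, (1, 1), axis=(0, 1))

robot_shift = estimate_shift(speckle, robot_now)
wall_shift = estimate_shift(wall, wall_now)
net_shift = (robot_shift[0] - wall_shift[0], robot_shift[1] - wall_shift[1])
print(robot_shift, wall_shift, net_shift)
```

A practical DIC implementation would add sub-pixel interpolation around the correlation peak, since vibration amplitudes are often well below the 0.6 mm covered by one pixel; this sketch resolves whole pixels only.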

Results of Testing

Analysis of the results showed that the Hiroshima University vibration monitoring system captured simultaneous measurements across the moving robot's structure, accurately detecting both high- and low-frequency vibrations. This level of accuracy is challenging to achieve using conventional sensor-based techniques. High computing power enabled efficient evaluation of vibration dispersion and real-time vibration visualization across the robot, providing a platform for early detection of abnormal behaviors.
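To show how low- and high-frequency components can be separated once a displacement time series is available for a virtual sensor, the sketch below applies a Fourier transform to a synthetic trace sampled at the 1,000 FPS test frame rate. The signal, its two frequencies (12 Hz and 180 Hz), its amplitudes, and the two-second duration are all hypothetical values chosen for illustration; they are not measurements from the study.

```python
import numpy as np

frame_rate_hz = 1000                 # test frame rate from the article
t = np.arange(2000) / frame_rate_hz  # 2 s of samples (hypothetical duration)

# Synthetic displacement trace in mm with one low and one high frequency component.
displacement = (0.5 * np.sin(2 * np.pi * 12 * t)
                + 0.1 * np.sin(2 * np.pi * 180 * t))

# Single-sided amplitude spectrum of the mean-removed trace.
spectrum = np.abs(np.fft.rfft(displacement - displacement.mean())) * 2 / len(t)
freqs = np.fft.rfftfreq(len(t), d=1 / frame_rate_hz)

# Frequencies of the two strongest spectral lines, in ascending order.
top = np.sort(freqs[np.argsort(spectrum)[-2:]])
print(top)
```

Running the same analysis on every virtual sensor gives a per-location spectrum, which is one way a map of vibration across the robot could be assembled.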

According to Hiroshima University, future work will focus on enhancing the robustness and practical applicability of the proposed approach. Plans call for it to be extended to real-time vibration monitoring during active robot motion, enabling more accurate characterization of operational dynamics. Additional robot configurations will be tested, and the researchers may employ multicamera stereo imaging to enable full 3D vibration analysis.

Allied Vision unites the machine vision brands Allied Vision, Chromasens, Mikrotron, NET, and SVS-Vistek under one brand, offering customer-centric, integrated hardware and software solutions.

For more information contact:

Allied Vision Technologies Inc.

102 Pickering Way, Ste. 502

Exton, PA 19341

978-225-2030

sales.americas@alliedvision.com

www.alliedvision.com